Our Approach

Key research questions

We set out to understand workers’ experience of AI in their jobs and possible opportunities to foster worker participation and voice in the processes of AI creation and deployment. Our key research questions were:

- How and why are AI and automation technologies changing workers’ tasks, coaching, and evaluation? What are workers’ reflections on the positives and negatives of those changes?

- How is workplace AI affecting different aspects of worker well-being (including purpose, meaning, and physical, emotional, and intellectual well-being)✳ as articulated and valued by workers themselves?

  ✳This report does not go into detail on financial well-being. Partnership on AI’s AI and Shared Prosperity Initiative and team addresses this important component of worker well-being in other publications, including Katya Klinova and Anton Korinek, “AI and Shared Prosperity,” in Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society, 2021, 645–51, https://doi.org/10.1145/3461702.3462619; Ekaterina Klinova, “Governing AI to Advance Shared Prosperity,” in The Oxford Handbook of AI Governance (Oxford University Press, 2022), https://doi.org/10.1093/oxfordhb/9780197579329.013.43; and Anton Korinek and Megan Juelfs, “Preparing for the (Non-Existent?) Future of Work,” NBER Working Paper Series, June 2022, 43.

- In what ways are workers currently participating in the creation and implementation of AI used in their workplaces? How much direct influence or decision-making power do they see themselves as having in these processes? Would they be interested in more ways to participate? Why or why not?

Research methods

✳Additional details on research methods can be found in Appendix 2.

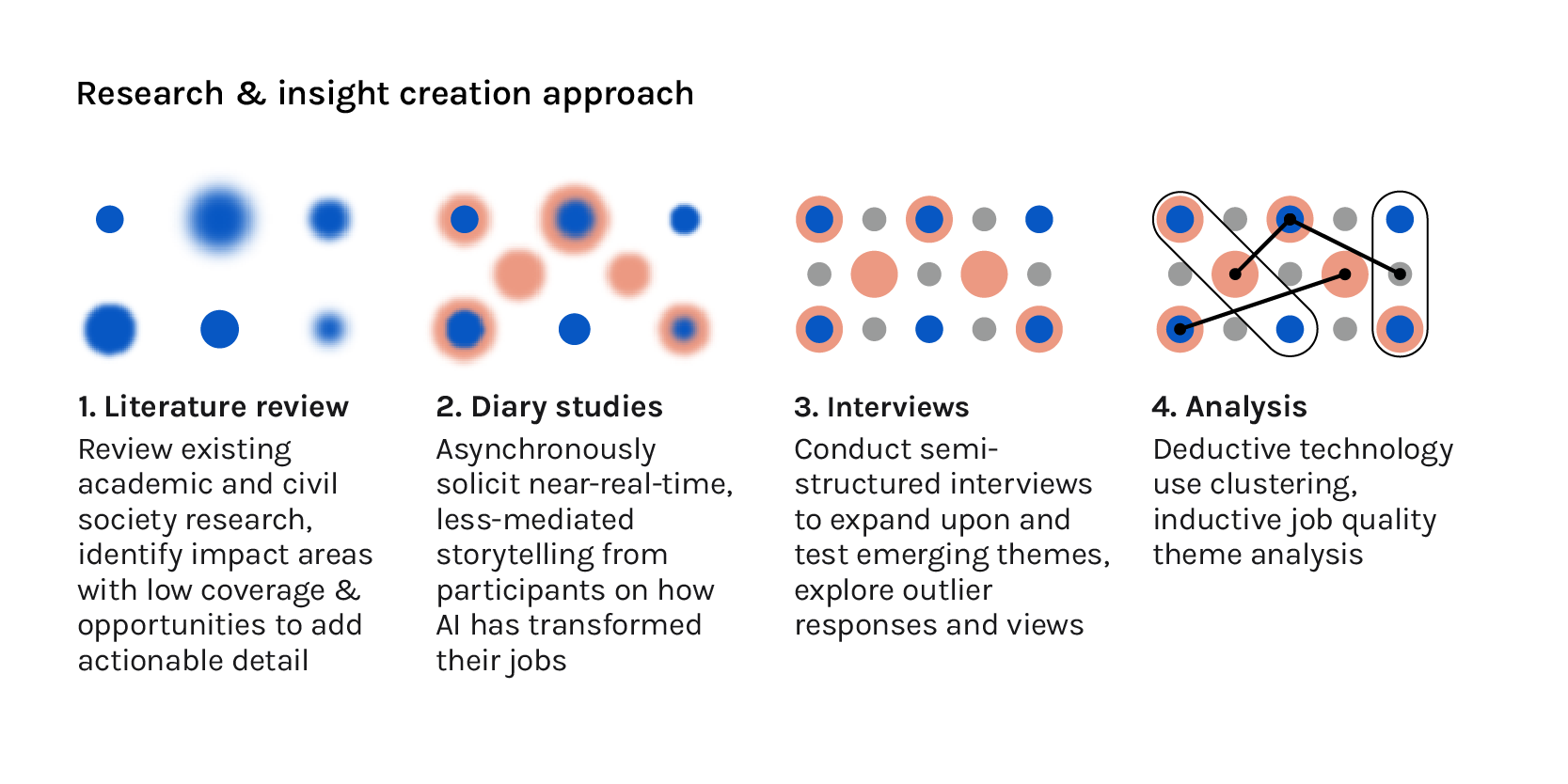

The findings of this report are grounded in a review of the existing research and two types of primary qualitative research we conducted with workers: diary studies and interviews. All primary research was conducted virtually due to the pandemic.

Site selection

✳Additional details on sites, included technologies, and participant demographics can be found in Appendix 1.

AI’s transformation of work is global in scope. Its impacts within and across countries often follow deeply grooved paths of inequality created by past economic and political systems. [Karen Hao, “Artificial Intelligence Is Creating a New Colonial World Order,” MIT Technology Review, accessed July 24, 2022, https://www.technologyreview.com/2022/04/19/1049592/artificial-intelligence-colonialism/; Shakir Mohamed, Marie-Therese Png, and William Isaac, “Decolonial AI: Decolonial Theory as Sociotechnical Foresight in Artificial Intelligence,” Philosophy & Technology 33 (December 1, 2020), https://doi.org/10.1007/s13347-020-00405-8; Aarathi Krishnan et al., “Decolonial AI Manyfesto,” https://manyfesto.ai/]

We conducted the primary research with three groups of workers, focusing on people holding individual contributor (rather than managerial) roles:

- Customer service agents in India

- Data annotators in sub-Saharan Africa

- Warehouse workers (e.g., pickers, packers, loaders) in the United States

We sought a range of geographies, industries, occupations, and skills. This multisite approach allowed us to explore diverse experiences on issues including:

- Whether there is something inherent to artificial intelligence as a technology (irrespective of geography, industry, and worker skills) in how it transforms work

- The economic opportunities and vulnerabilities associated with varying wage and income levels (between different occupations and geographies)

- Worker attitudes toward jobs and labor

- Labor market structures and near-term susceptibility to technological disruption by AI

- Managerial decision-making cultures

- Government interest in fostering local AI ecosystems

- Government interest and capacity to regulate AI’s impacts on workers [OECD.AI (2021), powered by EC/OECD (2021), “Database of National AI Policies,” https://oecd.ai/en/dashboards; Kofi Yeboah, “Artificial Intelligence in Sub-Saharan Africa: Ensuring Inclusivity” (Paradigm Initiative, December 2021), https://paradigmhq.org/report/artificial-intelligence-in-sub-saharan-africa-ensuring-inclusivity/]

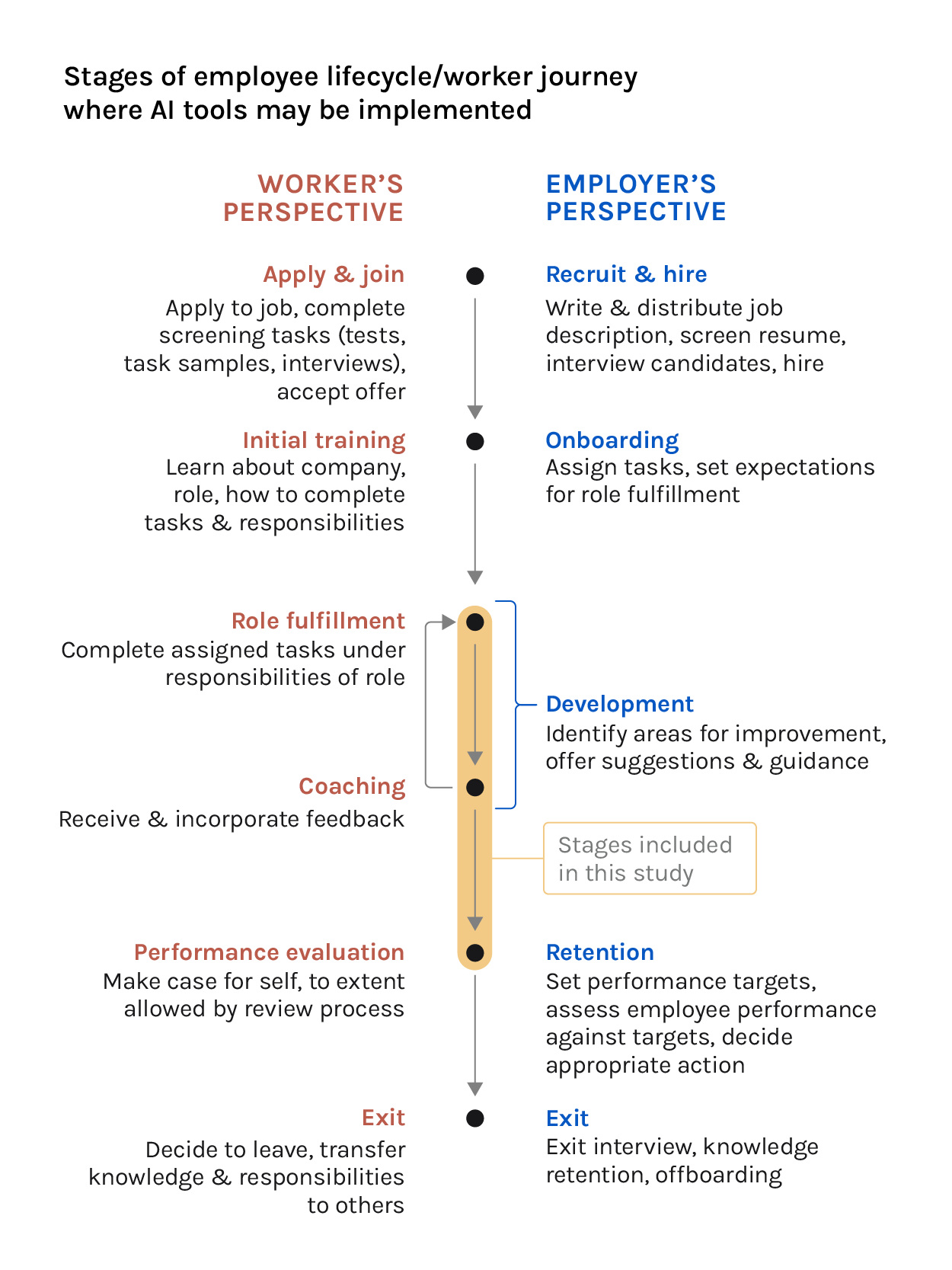

While workers may be affected by AI technologies across the “employee lifecycle” [adapted from Qualtrics’ employee lifecycle model, “Employee Lifecycle: The 7 Stages Every Employer Must Understand and Improve,” Qualtrics, https://www.qualtrics.com/experience-management/employee/employee-lifecycle/], this research focuses on use cases where workers could directly observe AI in their workplace and thus share their experiences of it. In line with this approach, this report also discusses different technologies as experienced by the participating workers, in what might be thought of as the “worker’s journey” in a given job. As an illustration, a technology used by an employer to provide guidance to workers on their tasks is discussed as a worker’s coaching tool, not as a manager’s automation or decision-support tool.

Experiences of AI

Technologies used in other stages, such as AI recruitment or assessment tools for job candidates, pose their own risks to workers; for an overview of a number of these areas, see “Platforms at Work: Automated Hiring Platforms and Other New Intermediaries in the Organization of Work.”

An area that has seen substantial research is the ways these tools reinforce biases related to race, gender, age, class, and disability status. Compared to many aspects of workplace AI, this area has received greater attention from more traditional AI ethics areas, such as fairness, transparency, and accountability, as well as from major employers. For an overview of potential AI bias issues in hiring, see “All the Ways Hiring Algorithms Can Introduce Bias.”

For an effort undertaken by employers to address issues related to algorithmic bias in workforce decisions, see “Algorithmic Bias Safeguards for Workforce.”

For a tool to assess potential issues with automated hiring software, see “A Silicon Valley Love Triangle: Hiring Algorithms, Pseudo-Science, and the Quest for Auditability.”

Workers largely encountered the AI technologies described in this study in three stages of their jobs: in their fulfillment of their roles, to coach them on their work, or to evaluate their performance.

Who we learned from

The occupations and locations selected feature workers representing a diversity of profiles. In India, offshore call center work is a stable, middle-class job often performed by college-educated workers fluent in a second language (in this case, English). Shifts are scheduled according to the needs of the outsourcing country, so workers often find themselves working night shifts, very early, or very late to match standard working hours in high-income English-speaking countries around the world. These hours are hard to navigate alongside family life; it is estimated that over 90% of people in these roles are under the age of 35. [Mayank Kumar Golpelwar, Global Call Center Employees in India: Work and Life between Globalization and Tradition (Springer, 2015)]

The data annotation workers in the sub-Saharan country where this research was conducted are similarly youthful. This work is often positioned as an entry-level job for those interested in the continent’s growing information technology industry. As educational requirements are less strict than those for offshore customer service workers in India, educational backgrounds are more varied. Most workers have at least a secondary school degree and many have gone on to take classes in or complete post-secondary or bachelor’s degrees.

Unlike in the other two sites, the demographic profile of warehouse workers in the United States is highly heterogeneous. The purpose of the work means worksites are distributed throughout the country rather than concentrated in a handful of cities or a region. Substantial skill or education requirements are uncommon for entry-level jobs in the industry. Given the US’s history of education inequality and the lack of access to quality education for many people of color, people of color are overrepresented in warehouse work in the US, making up nearly 60% of the industry workforce. [Hye Jin Rho, Shawn Fremstad, and Hayley Brown, “A Basic Demographic Profile of Workers in Frontline Industries” (Center for Economic and Policy Research, April 2020), https://cepr.net/wp-content/uploads/2020/04/2020-04-Frontline-Workers.pdf]

Substantial research and capital are being dedicated to automating the jobs covered in this research. Automation aspirations, however, are not automation realities.

Since the invention of automated retrieval systems in the 1950s, technology has enabled warehouse operators to process goods at ever-increasing speed. Yet more than 1.8 million people in the US work in the warehousing and storage industry today, more than 2.5 times the number a decade ago. [U.S. Bureau of Labor Statistics, “All Employees, Warehousing and Storage,” FRED, Federal Reserve Bank of St. Louis, July 2022, https://fred.stlouisfed.org/series/CES4349300001] Efficiency gains have lowered costs and increased consumer demand, but cost-effective, fully automated warehouses remain elusive for most goods. A similar narrative holds for call centers, with speculation about automating customer service since the creation of the ELIZA chatbot in the 1960s. Despite the conversion of recent natural language processing research into cutting-edge call center software, global demand for call center workers remains strong and the general public remains skeptical of fully automated customer service. [Lee Rainie et al., “AI and Human Enhancement: Americans’ Openness Is Tempered by a Range of Concerns” (Pew Research Center, March 2022), https://www.pewresearch.org/internet/wp-content/uploads/sites/9/2022/03/PS_2022.03.17_AI-HE_REPORT.pdf] Automation efforts often transform the tasks of jobs. But the demand for workers in both occupations continues, with no reliable endpoint in the near or medium term.

Data annotation is a comparatively new “job gained” from the rise of AI. [James Manyika et al., “Jobs Lost, Jobs Gained: What the Future of Work Will Mean for Jobs, Skills, and Wages” (McKinsey Global Institute, November 28, 2017), https://www.mckinsey.com/featured-insights/future-of-work/jobs-lost-jobs-gained-what-the-future-of-work-will-mean-for-jobs-skills-and-wages] It, too, is an automation target. As with the other jobs, automation is changing the content of the role, but demand for workers continues to grow, as increasingly sophisticated AI-modeling techniques demand new forms of data. [Mary L. Gray and Siddharth Suri, Ghost Work: How to Stop Silicon Valley from Building a New Global Underclass (Houghton Mifflin Harcourt, 2019)]

Furthermore, AI technologies similar to those in this report are proliferating throughout other jobs of all kinds. Without deliberate intervention, the decisions, incentives, and technologies underpinning the impacts discussed in this report will likely reproduce similar impacts for workers in other industries and locales. The occupations discussed here may not survive indefinitely — few jobs do. The aim of this report is not to protect specific jobs, but rather to address the interests of workers broadly (especially economically vulnerable workers) in the face of rapid technological change.

Participant recruitment

✳Additional details on participant recruitment can be found in Appendix 2.

Participants for this research were recruited in two ways:

India and US

We worked with a professional recruiter to identify interested and qualified candidates for the study. Participant groups for each site were then selected from these pools to create samples representative of the demographics of workers in those occupations in those locations.

Sub-Saharan Africa

Participants from the sub-Saharan Africa site work for a company that had developed a set of machine learning applications to assist their employees. This company was interested in better understanding its employees’ perspectives on the new software and the ways it has changed their work. The company facilitated introductions to a group of employees with experience using the software. The group could opt into the research. Participation was entirely voluntary. A strict firewall was implemented from the outset of the research to protect participant confidentiality and ensure they felt comfortable speaking freely about their experiences.

Though the primary research in this report is focused on formal sector workers, 60% of the world’s workers participate in the informal sector. [International Labour Office, “Women and Men in the Informal Economy: A Statistical Picture (Third Edition),” International Labour Office, 2018, http://www.ilo.org/wcmsp5/groups/public/---dgreports/---dcomm/documents/publication/wcms_626831.pdf] While few, if any, work directly with AI systems, AI is still transforming their work by changing informal labor market conditions.

Consider agriculture, where over 90% of the workforce is informal. [International Labour Office, “Women and Men in the Informal Economy: A Statistical Picture (Third Edition),” International Labour Office, 2018, http://www.ilo.org/wcmsp5/groups/public/---dgreports/---dcomm/documents/publication/wcms_626831.pdf] Globally, informal workers are two times more likely than formal sector workers to be members of the working poor and agricultural workers are more likely than other informal workers to be poor. [OECD and International Labour Organization, “Tackling Vulnerability in the Informal Economy,” 2019, https://www.oecd-ilibrary.org/content/publication/939b7bcd-en] Many are sharecroppers and contract farmers who make deals with formally incorporated companies to grow specific quantities of specific crops over a given period of time. Historically, negotiations would take into account an informal worker’s accumulated knowledge of local soil and weather conditions, performance of past crops, prior market prices, and other factors. Informal farmers possess the type of experiential knowledge passed through communities and generations, which can be formidably accurate. [James C. Scott, Seeing like a State: How Certain Schemes to Improve the Human Condition Have Failed, Yale Agrarian Studies (New Haven, Conn.: Yale Univ. Press, 2008)] The companies, on the other hand, possess the type of technocratic knowledge built through the collection and increasingly sophisticated analysis of data.

With the introduction of AI, informal farmers’ ability to negotiate critical provisions has radically decreased. Many farmers now face take-it-or-leave-it offers to produce crops they’ve never seen grown and which may require a year or more of invested cultivation before producing sellable yields. The unprecedented nature of the offers means farmers lack experiential knowledge to base their decisions on, and contracting companies are not sharing the details underlying their proposals, creating sizable information asymmetries.

The experience of the Self Employed Women’s Association (SEWA) in India working with women farmers in the informal sector has revealed a lack of inclusive, quality data. The algorithms used by companies rely on data collected by researchers and economists. Informal sector workers, and in particular women, have not been included in the design of data collection tools or the data collection itself. The exclusion of their perspectives and knowledge raises questions about the usability, authenticity, and relevance of this data.

The contracts created with this data are increasingly non-inclusive, and transfer risks to poor smallholder farmers, pushing them deeper into a vicious circle of poor data representation, poor contracts, high risk, increased poverty, and ever-growing debt. There needs to be a substantial focus on including small and marginal women farmers in the data collection processes, resulting in transparent and inclusive data captured firsthand from informal sector workers. [Reema Nanavaty, Expert interview with Reema Nanavaty, Director of Self Employed Women’s Association (SEWA), July 11, 2022]