In November, PAI publicly launched the AI Incident Database (AIID), comprising more than 1,000 reports of AI failures that caused harms or near-harms. Stewarded by a representative of PAI Partner XPRIZE, the AIID serves as a much-needed tool for AI researchers and developers, documenting a wide variety of real-world risks posed by automated systems.

As a Vice News story covering the database’s debut put it, “the platform is being used to document and compile AI failures so they won’t happen again.”

Authorities like the Federal Aviation Administration have long maintained databases of adverse outcomes for the benefit of their respective fields, but no systematic repository of harmful incidents previously existed for members of the AI community. The need for such a system has only grown more urgent in recent years as AI increasingly enters safety-critical domains like healthcare, criminal justice, and transportation. To learn from the mistakes of the past, the past must first be chronicled, and the AIID was created to help achieve exactly that.

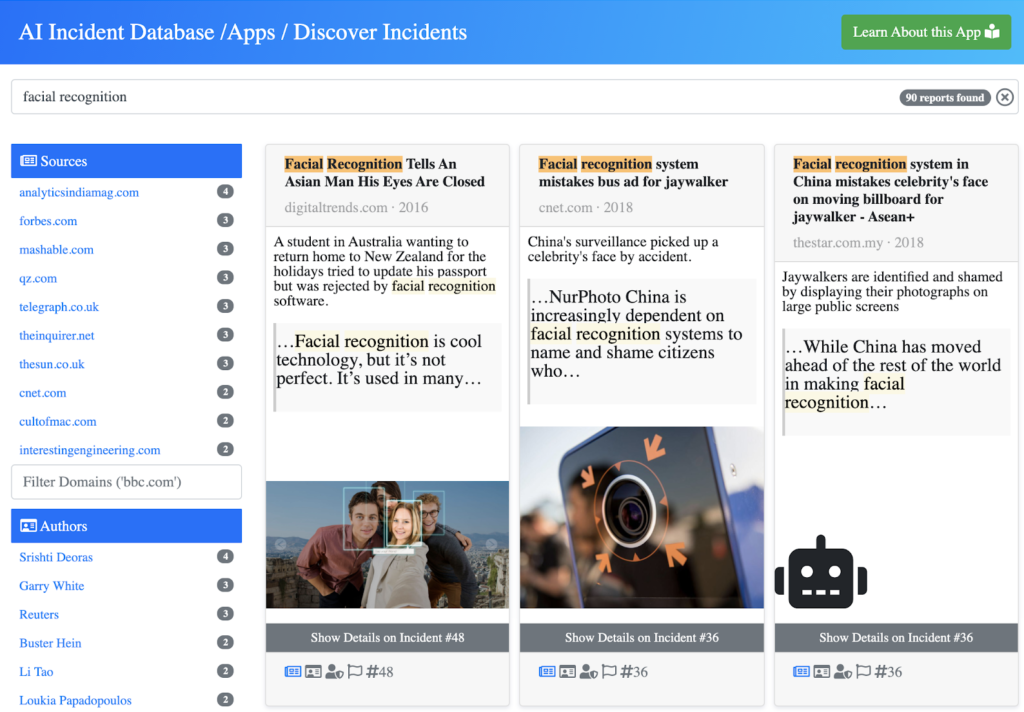

This project sprang directly from the needs of the PAI research community, which found that no existing resource offered a comprehensive overview of AI safety and fairness failures. Now, with the crowdsourced AIID, an AI researcher can type "policing" into the database's search field and retrieve dozens of citable incident reports on the topic. Similarly, corporate product managers hoping to anticipate and mitigate risks could search the AIID for "translate" before launching a new translation service. There they might learn about Incident 72, in which a social media user was reportedly arrested after his "good morning" message was automatically translated as "attack them."

Created in response to a practical challenge and cultivated through contributions from the greater AI community, the AIID exemplifies the kind of novel solutions fostered by PAI's collaborative approach to responsible AI: solutions that ultimately benefit not just some, but all.

![AI incidents](https://partnershiponai.org/wp-content/uploads/2021/06/AI-incidents-big.png)