Studying AI Explanations to Improve Healthcare for Underserved Communities

![Hero image](https://partnershiponai.org/wp-content/uploads/2022/06/healthcare-XAI-2080x960-1.gif)

Today, machine learning (ML) systems are increasingly being deployed in high-stakes domains, including healthcare, to make predictions. Consequently, it is more important than ever for people to be able to understand how these predictions are made, enabling them to contextualize the results and make fully informed decisions. This has led to the development of Explainable AI (XAI), AI systems that make their predictions more transparent to users. Previous research by Partnership on AI (PAI) and others, however, found that the majority of XAI deployments serve developers and engineers, not the end users who ultimately act on ML system results and who may not have ML expertise themselves.

With that in mind, how can we build AI systems that provide end users with the information they need about how these systems work?

To begin answering this question, PAI partnered with Portal Telemedicina, a digital healthcare organization in Brazil, to study the implementation of AI in a real-world setting. Portal Telemedicina’s platform, which helps triage medical exams using an AI model before they are evaluated by a clinician, connects over 500 clinics to major hospitals. This offers a unique opportunity to evaluate the impact AI can have on people who do not have easy access to specialist healthcare.

Our research began with a multistakeholder interview study to determine what types of explanations are most relevant to different types of users (specifically, executives, ML engineers, and end users) when using AI systems for healthcare. Based on our findings, we then designed both an XAI system specifically for end users and a future study to evaluate the impact of such a system in the real world.

Building XAI that is useful in practice requires collaborative efforts that consider the needs of all stakeholders. Our study serves as a model for how this can be done. In this blog post, you will learn more about the importance of AI explainability, the results of our study, and how you can get involved in PAI’s future work with AI and healthcare.

Background

XAI systems have become increasingly popular as mechanisms for understanding predictions from ML systems. By providing insights into the inner workings of complex AI models, XAI systems could have a profound impact on human/AI interactions, improving collaboration and mitigating harms.

Given a trained ML model and a result from that model, an XAI system seeks to explain the rationale behind the model’s prediction of that result. This is especially important in healthcare and other high-stakes scenarios because ML models are not perfect and can make mistakes. With appropriate explanations, end users can contextualize model results and combine them with their own domain expertise in order to make fully informed decisions.

Our study was based on an application-grounded evaluation: it investigated explanations for real users in the context of a real use case. Rather than focusing on ML engineers (as much of the previous research on XAI has done), we focused on the technicians whose work, in this case electrocardiogram (EKG) exams, is evaluated by the ML system.

EKGs measure the heart’s electrical activity and are used by clinicians for diagnosing various heart conditions. Low-quality EKG exams are a problem because they prevent clinicians from being able to diagnose patients accurately. In Portal Telemedicina’s current workflow, low-quality exams are discovered only at the end of the pipeline, when clinicians find they are unable to read the exams forwarded to them due to quality issues. At this point, the patient has often already gone home and therefore must return to the clinic in order to redo the exam. This is why Portal Telemedicina designed an AI system: to flag low-quality EKGs in real time so that the exam can be redone right away and patients do not have to return to the clinic.

Our hypothesis was that if the system were to include an explanation in addition to a real-time low-quality flag, it would aid technicians in determining how to fix the low-quality issue. This is an important use case because of its impact on underserved communities: for patients who live in remote regions, it can be difficult to come to a clinic even once, let alone twice, so it is important that patients have access to quality medical care with only one trip to the clinic.

Testing this hypothesis required us to answer two key questions for XAI in practice:

- In this specific context, what types of explanations are most appropriate for different types of stakeholders, including end users?

- How can we evaluate the utility of explanations for both increasing usability and building trust in the system?

Findings

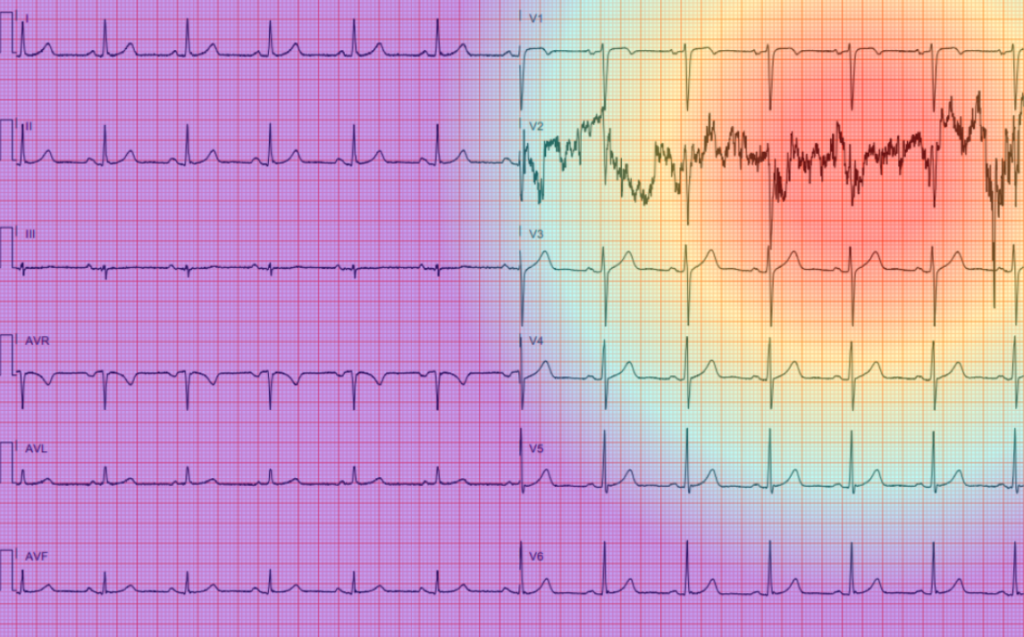

To answer the first question and understand the explainability needs and goals of different groups in this use case, we conducted an interview study with three types of stakeholders: company executives, ML developers, and end users (i.e., the technicians performing EKG exams). Eight main themes emerged from these interviews, including “improving outcomes,” “trust in the system,” and “explanation suitability”; they are explored at greater length in our paper. Ultimately, our findings suggested that saliency maps (which use colors to highlight which part of an image is most important) would be the most useful type of explanation for helping technicians understand why the ML system is flagging their exams as low-quality.

A mockup of a saliency map explanation for an EKG exam that is predicted to be low-quality. Red highlights the part of the image most important to the model’s prediction, while purple indicates the least important area.
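This post does not describe how the saliency maps would be computed, so the following is only a minimal sketch of the general idea, using one common technique (occlusion-based saliency) on a synthetic one-dimensional signal. Everything here is an assumption made for illustration: `toy_low_quality_score` is a hypothetical stand-in for a trained quality model, the sine wave is not a real EKG, and the window size is arbitrary. The sketch hides one window of the signal at a time and records how much the "low-quality" score drops; regions with the largest drop are the ones the score depends on most.

```python
import numpy as np


def toy_low_quality_score(signal: np.ndarray) -> float:
    """Hypothetical stand-in for a trained model (higher = lower quality).

    The score is the mean absolute jump between neighboring samples,
    i.e. a crude measure of noisiness.
    """
    return float(np.mean(np.abs(np.diff(signal))))


def occlusion_saliency(signal: np.ndarray, score_fn, window: int = 20) -> np.ndarray:
    """Return, per sample, how much the score drops when its window is flattened."""
    baseline = score_fn(signal)
    saliency = np.zeros(len(signal))
    for start in range(0, len(signal), window):
        occluded = signal.copy()
        # Replace the window with its own mean, removing local detail.
        occluded[start:start + window] = np.mean(signal[start:start + window])
        saliency[start:start + window] = baseline - score_fn(occluded)
    return saliency


# Synthetic example: a clean sine wave with a noise burst at samples 100-119.
rng = np.random.default_rng(0)
t = np.linspace(0, 4 * np.pi, 200)
signal = np.sin(t)
signal[100:120] += rng.normal(0, 1.0, 20)

saliency = occlusion_saliency(signal, toy_low_quality_score)
most_important = int(np.argmax(saliency))
print(f"Most influential sample index: {most_important}")
```

In a technician-facing interface, saliency values like these would be mapped to colors (for example, red for high values and purple for low ones) and overlaid on the exam image.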

Based on this finding, we designed a technician-facing interface for using saliency map explanations. We then designed a future study to measure, in practice, the effect of these explanations on the workflow of Portal Telemedicina technicians. To evaluate the system as it would exist in the real world and to determine whether it leads technicians to perform better EKG exams over time, we opted for a longitudinal setup for this study.

What’s Next for PAI and the Health AI Community

In this work, we followed the three-step approach recommended by PAI’s previous research for providing explanations to end users: 1) identify stakeholders, 2) engage with each stakeholder, and 3) understand the purpose of the explanation. Our study provides an example of how to generate AI explanations that are both context- and stakeholder-specific, as well as an experimental setup for evaluating such explanations. This work can be used as a framework for generating and evaluating explanations for other stakeholders in high-stakes contexts.

PAI, along with The MITRE Corporation and Duke University, has recently been awarded a grant from The Moore Foundation to explore relevant topics in Health AI. We are currently hosting a series of facilitated sessions to approach this discovery exercise through convenings and conversation. We are looking for folks working in the space to participate with us. Please join our Health AI Community by opting into our mailing list here. To learn more, please contact Christine Custis at christine@partnershiponai.org.